Agentic search moves you from optimizing for rankings and clicks to optimizing for AI-driven plans, actions, and measurable outcomes. Instead of keyword-to-SERP behavior, you shape structured intent signals such as constraints, budgets, and success criteria so that assistants can recommend and execute. You win by publishing agent-ready, quotable assets with clear methods, benchmarks, and citations that earn trust through corroboration. Measure influence via mention share, citation frequency, assisted conversions, and task completion—more tactics and governance follow.

Agentic Search: Definition and Why It Matters

Agentic search is a shift from keyword retrieval to goal-driven execution: an AI agent interprets your intent, plans multi-step actions, queries multiple sources, and returns a synthesized recommendation or completed task, not just a list of links. Here, "agentic" describes software that reasons, selects tools, and acts autonomously within guardrails to achieve a measurable objective.

For marketers, what matters is performance and leverage. You don’t just win impressions; you win inclusion in the agent’s final answer, shortlist, or action. That changes how you structure product data, proofs, and policies so agents can verify claims fast. You’ll prioritize machine-readable specs, pricing, availability, reviews, and compliance signals. You’ll also instrument outcomes—assistant mentions, downstream conversions, and task completion rates—so you can optimize for real customer intent, not clicks.

Traditional Search: The Baseline Model (Queries → Clicks)

In traditional search, you translate query intent into keyword targets, then compete for visibility through ranking signals that shape the SERP. You win attention when your result earns the click—CTR becomes your leading indicator of relevance and demand capture. You then tie those clicks to outcomes with attribution, so you can prove ROI and reallocate spend to what drives revenue.

Query Intent And Keywords

Why did traditional search become the default growth channel for so many marketers? You could translate demand into measurable clicks by mapping query intent to keywords, then budgeting against predictable volume and CPC. That clarity also supported brand safety and data privacy: you targeted declared needs, not inferred behavior, and you could audit where the spend went.

To win, you segment intent into navigational, informational, and transactional buckets, then build keyword sets that mirror each stage. You prioritize high-intent terms for revenue, mid-funnel questions for education, and branded queries for defense. You validate with search volume, CTR, conversion rate, and cost-per-acquisition, trimming waste with negatives and match types. In this model, keywords are your control surface; intent is the operating system driving outcomes.

Ranking Signals And SERPs

How do rankings actually turn intent into revenue? In traditional search, you win by aligning with the algorithm’s ranking signals: relevance, authority, content depth, freshness, and technical performance. You should treat these as measurable levers—map keyword clusters to pages, tighten entity coverage, earn credible links, and reduce latency. Your goal is simple: secure above-the-fold visibility where the market shops for answers.

But SERP dynamics complicate the baseline model. Features like local packs, snippets, videos, and shopping units reshape the competitive set and real estate by query class. You can’t optimize a page in isolation; you must optimize against the SERP layout you’re entering. As agentic signals emerge, your data stack must monitor volatility and feature presence, not just rank position.

Click-Through And Attribution

Where does the value of a top ranking actually land on your P&L? In traditional search, it lands after a click you can count. You map intent (query) to exposure (impression), to action (click), to outcome (session, lead, revenue). That chain enables clean bid models, ROAS targets, and incrementality tests. You can segment by device, geo, and SERP feature, then optimize titles, schema, and landing speed to move CTR and conversion.
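
The query-to-revenue chain above reduces to a few ratios. Here is a minimal Python sketch, assuming a hypothetical `FunnelRow` record and made-up numbers (neither comes from a real analytics export):

```python
from dataclasses import dataclass


@dataclass
class FunnelRow:
    """One row of search performance data; all fields are illustrative."""
    impressions: int
    clicks: int
    conversions: int
    revenue: float
    spend: float


def funnel_metrics(row: FunnelRow) -> dict[str, float]:
    """Return CTR, conversion rate, and ROAS, guarding against zero denominators."""
    ctr = row.clicks / row.impressions if row.impressions else 0.0
    conversion_rate = row.conversions / row.clicks if row.clicks else 0.0
    roas = row.revenue / row.spend if row.spend else 0.0
    return {"ctr": ctr, "conversion_rate": conversion_rate, "roas": roas}


# Hypothetical numbers, not real campaign data.
example = FunnelRow(impressions=10_000, clicks=400, conversions=20,
                    revenue=3_000.0, spend=1_000.0)
metrics = funnel_metrics(example)
print(metrics)
```

Computing the same ratios per device, geo, or SERP feature is a matter of grouping rows before calling `funnel_metrics`.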

But even here, you face attribution gaps: dark social, cross-device, cookie loss, and assisted conversions dilute the last-click truth. As agentic journeys emerge, answers delivered without a click can further sever clicks from value, so you’ll need sturdier marketing mix modeling (MMM), server-side tagging, and holdouts to defend budget decisions.

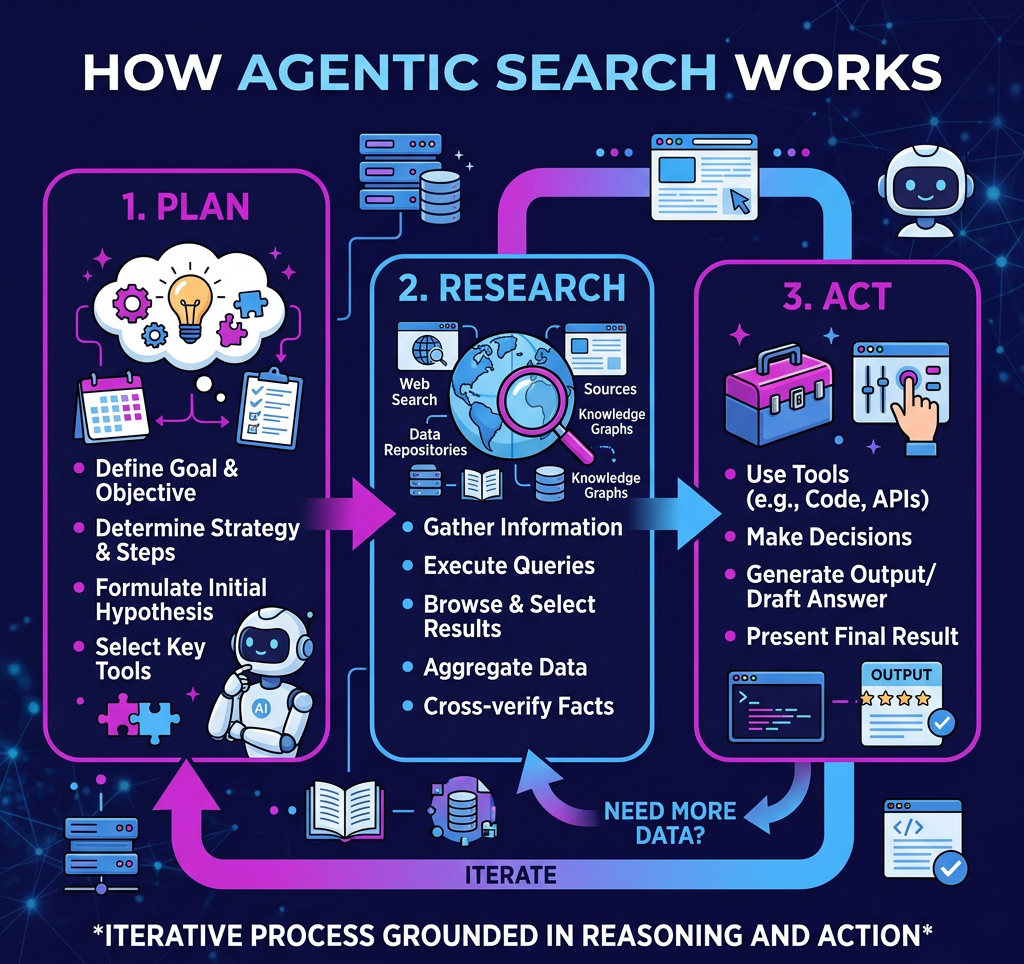

How Agentic Search Works (Plan → Research → Act)

In practice, agentic search runs less like a query-and-click tool and more like a managed workflow that moves from Plan → Research → Act. You start with agentic planning: you define the objective, constraints, KPIs, and acceptable risk, so outputs align to business outcomes, not just relevance.

Next, you operationalize research integration by pulling signals from SERP features, competitor pages, first-party analytics, CRM, and prior campaign results. The system reconciles conflicts, flags gaps, and ranks sources by freshness and authority, giving you an auditable evidence trail.

Finally, you act. You generate briefs, recommendations, and prioritized tasks, then route them into your stack—content ops, paid, or SEO—while monitoring performance and triggering iterative replans as data shifts.
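
The research step's "rank sources by freshness and authority" can be sketched in a few lines of Python. This is an illustration under stated assumptions, not how any particular agent scores sources: the `Source` record, the 50/50 weighting, and the 180-day half-life are all hypothetical choices you would tune.

```python
from dataclasses import dataclass
from datetime import date


@dataclass
class Source:
    name: str
    authority: float  # assumed 0..1 score that you assign yourself
    published: date


def score(source: Source, today: date, half_life_days: float = 180.0) -> float:
    """Blend authority with a freshness term that halves every half_life_days."""
    age_days = max((today - source.published).days, 0)
    freshness = 0.5 ** (age_days / half_life_days)
    return 0.5 * source.authority + 0.5 * freshness


def rank_sources(sources: list[Source], today: date) -> list[Source]:
    """Return sources ordered from highest to lowest blended score."""
    return sorted(sources, key=lambda s: score(s, today), reverse=True)


# Hypothetical inputs for illustration only.
today = date(2025, 1, 1)
sources = [
    Source("competitor_page", authority=0.6, published=date(2023, 1, 1)),
    Source("crm_export", authority=0.9, published=date(2024, 12, 1)),
    Source("serp_snapshot", authority=0.5, published=date(2024, 12, 31)),
]
ranked = rank_sources(sources, today)
for s in ranked:
    print(f"{s.name}: {score(s, today):.3f}")
```

Keeping the score function explicit is what makes the evidence trail auditable: anyone can see why one source outranked another.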

Intent and UX: Agentic vs Traditional

Why does intent feel clearer in agentic search than in traditional search? Because you’re not just matching keywords—you’re steering a goal-oriented system that asks, infers, and iterates. Traditional UX optimizes clicks and pages; agentic UX optimizes outcomes and next steps, shrinking ambiguity and time-to-value. You’ll see intent expressed in structured constraints, preferences, and success criteria, which you can measure through completion rate, task time, and follow-up prompts.

- You capture richer intent signals: constraints, budget, context, and tolerances

- You reduce friction: fewer queries, fewer pogo-sticks, faster decisions

- You improve control: prompt engineering turns vague needs into executable tasks

Strategically, you shift from ranking-centric metrics to journey efficiency and user confidence.

Where Brands Appear in Agentic Search Results

In agentic search, you don’t just compete for clicks—you compete for inclusion in the assistant’s summary. Your brand shows up when the model names you as evidence in its narrative or selects you as a vendor in a recommendation set. That shifts your KPI from ranking position to share of mentions and selection rate, because those placements drive downstream consideration and conversion.

Brand Mentions In Summaries

Think of the summary as the new shelf space: agentic search often returns a synthesized narrative, and brands win visibility only if the model chooses to name them within that narrative. Your goal is to earn brand mentions that feel inevitable, aligned with the user’s intent, and in line with the summary tone the model adopts.

- Instrument “mention share”: track how often you’re named across target prompts, markets, and devices.

- Engineer citation gravity: publish uniquely quotable facts, benchmarks, and definitions that models can lift verbatim.

- Shape narrative fit: ensure your value props map to common summary frames (compare, explain, troubleshoot) with consistent terminology.

You’ll win when your brand becomes the cleanest shorthand for outcomes, not just a URL. Optimize for precision, not volume, and measure lift weekly.
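
Mention share is straightforward to instrument once you have a set of assistant responses to your target prompts. Here is a minimal Python sketch, assuming you have already collected the response texts yourself; the brand names and responses below are invented, and the whole-word match is a simplification that will miss aliases and misspellings.

```python
import re
from collections import Counter


def mention_share(responses: list[str], brands: list[str]) -> dict[str, float]:
    """Share of responses naming each brand at least once (case-insensitive, whole word)."""
    counts = Counter()
    for text in responses:
        for brand in brands:
            if re.search(rf"\b{re.escape(brand)}\b", text, flags=re.IGNORECASE):
                counts[brand] += 1
    total = len(responses)
    return {brand: (counts[brand] / total if total else 0.0) for brand in brands}


# Hypothetical responses gathered from prompt testing.
responses = [
    "For small teams, Acme and Globex are common picks.",
    "Globex is often recommended for enterprise rollouts.",
    "Consider an open-source option first.",
]
share = mention_share(responses, ["Acme", "Globex"])
print(share)
```

Run it per prompt set, market, and device so that week-over-week changes are comparable.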

Vendor Selection In Recommendations

When does agentic search stop summarizing and start picking winners? It happens when the system shifts from listing options to recommending a specific vendor, bundle, or next action. At that moment, you’re no longer competing for clicks; you’re competing for selection.

To influence recommendations, you need machine-readable proof: verified specs, pricing, availability, policies, and performance signals the agent can trust. Tight vendor onboarding matters—standardize product feeds, resolve identity and SKU conflicts, and publish service-level metadata. Pair it with strong data governance so your claims remain consistent across channels, updates propagate fast, and exceptions don’t poison trust. Track inclusion rate, rank share within recommendations, and “chosen” frequency in agent logs or partner dashboards. Then iterate: fix missing attributes, improve outcomes, and earn default status.
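
"Fix missing attributes" starts with knowing which ones are missing. The following Python sketch audits a product feed for gaps. The required attribute list and the feed records are assumptions for illustration; substitute whatever your partners or marketplaces actually require.

```python
# Hypothetical list of attributes an agent might need to verify an offer.
REQUIRED_ATTRIBUTES = ("sku", "price", "currency", "availability", "return_policy_url")


def missing_attributes(record: dict) -> list[str]:
    """List required attributes that are absent or empty in one feed record."""
    return [key for key in REQUIRED_ATTRIBUTES if record.get(key) in (None, "")]


def audit_feed(feed: list[dict]) -> dict[str, list[str]]:
    """Map each incomplete record's SKU (or a positional placeholder) to its gaps."""
    report = {}
    for index, record in enumerate(feed):
        gaps = missing_attributes(record)
        if gaps:
            report[record.get("sku") or f"record_{index}"] = gaps
    return report


# Invented feed records, not real product data.
feed = [
    {"sku": "A-100", "price": 49.0, "currency": "USD",
     "availability": "in_stock", "return_policy_url": "https://example.com/returns"},
    {"sku": "A-200", "price": None, "currency": "USD",
     "availability": "", "return_policy_url": "https://example.com/returns"},
]
report = audit_feed(feed)
print(report)
```

Tracking the size of this report over time gives you a simple completeness trend to set alongside inclusion rate.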

How AI Chooses Citations (and Assigns Trust)

Although AI systems can sound authoritative, they don’t “know” what’s true—they assemble answers by ranking sources for relevance, reliability, and corroboration. You influence that ranking by signaling provenance, consistency, and third-party validation across the web. Models score citation reliability using patterns like historical accuracy, alignment across independent references, and freshness relative to the query’s time sensitivity. When sources conflict, they’ll often privilege the cluster with stronger cross-citation density and clearer attribution, shaping perceived agentic trust.

- Measure how often your claims are repeated verbatim across credible domains

- Audit broken links, outdated stats, and authorless pages that reduce trust signals

- Track which publishers cite you, and whether those citations include data and methodology

Treat citations as a portfolio: diversify, document, and monitor drift over time.
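
To audit how widely a claim is repeated verbatim, you can count the distinct hosts that carry it. This Python sketch assumes you have already fetched page text yourself; the URLs and copy are invented, and treating each hostname as an independent source is a rough proxy, not a description of how any model scores corroboration.

```python
from urllib.parse import urlparse


def normalize(text: str) -> str:
    """Lowercase and collapse whitespace so trivial formatting differences do not matter."""
    return " ".join(text.lower().split())


def corroborating_domains(claim: str, pages: list[tuple[str, str]]) -> set[str]:
    """Return the distinct hostnames whose page text contains the claim verbatim."""
    needle = normalize(claim)
    domains = set()
    for url, body in pages:
        if needle in normalize(body):
            host = urlparse(url).hostname or ""
            domains.add(host.removeprefix("www."))
    return domains


# Hypothetical (url, page text) pairs.
pages = [
    ("https://www.example.org/report", "Our benchmark: median  setup time is 12 minutes."),
    ("https://news.example.net/a", "Median setup time is 12 minutes, per the vendor."),
    ("https://example.org/blog", "median setup time is 12 minutes"),
    ("https://other.example.com/x", "Setup is quick."),
]
domains = corroborating_domains("median setup time is 12 minutes", pages)
print(sorted(domains))
```

Two pages on the same host count once, which keeps your own site from inflating the number.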

Content That Performs in Agentic Search

How do you get your content picked up, quoted, and acted on by an agentic system—not just ranked? You design it to be executable. Agents favor assets that answer a task, expose assumptions, and reduce uncertainty fast. Lead with a clear decision, then provide evidence: methods, inputs, and constraints. Package outcomes in reusable units—tables, step-by-step playbooks, and summarized key points—so the agent can lift and apply them without rewriting.

You’ll outperform competitors when you pair authority with operability. Publish original benchmarks, define metrics unambiguously, and include audit-ready sources. Maintain tight data governance, so figures stay current and attributable. Align content with how agents and their users decide by mapping it to user intents, risk tolerance, and approval workflows. When your content reduces effort and liability, agents choose it as the safest course of action.

Agentic Search SEO: What to Change Now

To win in agentic search, you’ve got to optimize for agent-ready answers, not just rankings. You’ll structure content into clear, extractable responses and back them with verifiable sources so agents can select you with confidence. At the same time, you’ll strengthen entity signals—consistent naming, schema, and authoritative references—so models reliably connect your brand to the topics that drive the pipeline.

Optimize For Agent Answers

Right now, agentic answers pull from multiple sources, synthesize a recommendation, and often complete the task, so your content has to earn selection, not just a click. You’ll win by packaging your expertise for extraction: clear definitions, tight claims, and proof that an agent can safely reuse. Map pages to decision moments, not keywords, and help the agent refine user intent by stating explicit inputs, constraints, and expected outputs.

- Lead with a one-sentence “best answer,” then support it with measurable criteria, benchmarks, and trade-offs.

- Publish task-ready artifacts: checklists, calculators, comparison tables, and step-by-step workflows with parameters.

- Instrument outcomes: track quote-rate in summaries, assisted conversions, and downstream task completion, then iterate weekly.

Design for synthesis, and you’ll capture demand before the click ever happens.

Strengthen Entity Signals

Why do agents trust one brand, product, or expert enough to cite it, rank it, and act on it? Because your entity signals resolve ambiguity at scale. You strengthen them by making your identity, expertise, and relationships machine-verifiable across every surface: Organization and Person schema, consistent NAP (name, address, phone), owned profiles, citations, and authoritative backlinks. Tie products to SKUs, specs, pricing, availability, and reviews with structured data so agents can compare and transact. Publish verifiable proof: studies, benchmarks, methodology, and bylines that map to real experts. Reduce drift by standardizing naming conventions, logos, and canonical URLs. Don’t chase links from unrelated sites; use a narrower tactic instead: build a public knowledge base and get it referenced by industry datasets and tools.
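
Tying a product to its SKU, price, and availability usually means emitting schema.org JSON-LD. Here is a small Python sketch that builds Product markup from plain values. The helper `product_json_ld` and the sample product are hypothetical; the property names (`sku`, `brand`, `offers`, `priceCurrency`, `availability`) come from the schema.org vocabulary.

```python
import json


def product_json_ld(name: str, sku: str, brand: str,
                    price: float, currency: str, in_stock: bool) -> str:
    """Serialize a schema.org Product with one Offer as a JSON-LD string."""
    availability = ("https://schema.org/InStock" if in_stock
                    else "https://schema.org/OutOfStock")
    data = {
        "@context": "https://schema.org",
        "@type": "Product",
        "name": name,
        "sku": sku,
        "brand": {"@type": "Organization", "name": brand},
        "offers": {
            "@type": "Offer",
            "price": f"{price:.2f}",
            "priceCurrency": currency,
            "availability": availability,
        },
    }
    return json.dumps(data, indent=2)


# Invented product used only to show the output shape.
markup = product_json_ld("Example Widget", "A-100", "Example Co", 49.0, "USD", True)
print(markup)
```

Generating the markup from the same record that feeds your pricing page is one way to keep naming and figures from drifting apart.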

How to Measure Impact When Clicks Disappear

Many agentic search experiences answer the question without sending a single click to your site, so your measurement model has to shift from traffic-centric reporting to influence-centric attribution. Treat the agent as a new channel and instrument downstream outcomes rather than sessions. Start by establishing baseline demand, then run geo or audience holdouts to quantify incremental lift while respecting data privacy. Track visibility in agent responses via prompt testing, and monitor relevance drift by comparing cited entities, features, and language over time. Operationalize a measurement stack that ties exposure to revenue signals:

- Agent answer-share and citation frequency for your entities

- Branded search lift, direct visits, and sales-qualified conversations

- Conversion rate and LTV changes for users influenced by agent summaries

Report leading and lagging indicators together, weekly, with confidence intervals.
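
A holdout comparison with a confidence interval can be as simple as a difference in conversion rates. This Python sketch uses the standard normal approximation for two independent proportions; the function name and the counts are illustrative, and small samples or geo-level designs call for more careful methods.

```python
import math


def lift_with_ci(conv_exposed: int, n_exposed: int,
                 conv_holdout: int, n_holdout: int,
                 z: float = 1.96) -> tuple[float, float, float]:
    """Return (absolute lift, lower bound, upper bound) for exposed minus holdout rate.

    Uses a normal approximation; z=1.96 corresponds to roughly a 95% interval.
    """
    if n_exposed <= 0 or n_holdout <= 0:
        raise ValueError("both groups need at least one user")
    p_exposed = conv_exposed / n_exposed
    p_holdout = conv_holdout / n_holdout
    diff = p_exposed - p_holdout
    std_err = math.sqrt(p_exposed * (1 - p_exposed) / n_exposed
                        + p_holdout * (1 - p_holdout) / n_holdout)
    return diff, diff - z * std_err, diff + z * std_err


# Hypothetical counts: 600 of 10,000 exposed users convert vs 500 of 10,000 held out.
lift, low, high = lift_with_ci(600, 10_000, 500, 10_000)
print(f"lift={lift:.4f}, interval=({low:.4f}, {high:.4f})")
```

If the interval excludes zero, the lift is unlikely to be noise at that confidence level; if it straddles zero, keep collecting data before you move budget.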

Agentic Search Risks: Brand, Accuracy, and Response Plans

Influence-based measurement tells you whether agentic search moves revenue, but it won’t protect you when the agent gets your story wrong. You need risk controls tuned for generative summaries, not blue links: brand safety filters, approved knowledge sources, and policy-aligned tone constraints across agents and tools.

Start with a quantified threat model: map high-stakes intents (pricing, legal, health, competitor comparisons) and set factual accuracy thresholds, escalation rules, and audit logs. Monitor answer drift with synthetic queries and human spot checks; track error rate, citation quality, and harmful association signals. When issues hit, activate a response plan: freeze unstable prompts, publish corrective canonical pages, push structured data, and brief comms and support with a single source of truth. Run postmortems weekly and iterate fast.
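
Accuracy thresholds are easier to enforce when the check is automated. Here is a minimal Python sketch, assuming you log each synthetic query as an `(intent, is_correct)` pair after human or automated review; the intents, thresholds, and results below are all hypothetical.

```python
from collections import defaultdict

# Hypothetical maximum tolerated error rate per high-stakes intent.
ERROR_THRESHOLDS = {"pricing": 0.02, "legal": 0.01, "comparison": 0.05}


def error_rates(checks: list[tuple[str, bool]]) -> dict[str, float]:
    """Compute the share of incorrect answers per intent."""
    totals = defaultdict(int)
    errors = defaultdict(int)
    for intent, is_correct in checks:
        totals[intent] += 1
        if not is_correct:
            errors[intent] += 1
    return {intent: errors[intent] / totals[intent] for intent in totals}


def breaches(checks: list[tuple[str, bool]],
             thresholds: dict[str, float]) -> dict[str, float]:
    """Return intents whose error rate exceeds its threshold.

    Intents without a configured threshold default to zero tolerance.
    """
    rates = error_rates(checks)
    return {intent: rate for intent, rate in rates.items()
            if rate > thresholds.get(intent, 0.0)}


# Invented review results for illustration.
checks = ([("pricing", True)] * 99 + [("pricing", False)]
          + [("legal", True)] * 100
          + [("comparison", True)] * 9 + [("comparison", False)])
flagged = breaches(checks, ERROR_THRESHOLDS)
print(flagged)
```

Anything that lands in `flagged` is a candidate for the escalation rules and response plan described above.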

Conclusion

Congrats: you’ve spent years optimizing for “10 blue links,” and now your best customer is an AI that doesn’t click. In agentic search, you don’t win by ranking—you win by being cited, trusted, and actionable while the model plans, researches, and executes. Shift content toward clear facts, product data, and verifiable expertise. Track mentions, attribution, and downstream conversions, not vanity CTR. And yes, build a response plan—because hallucinations don’t respect your brand guidelines.