Crawl vs. Index: The SEO Difference

Where does SEO usually stall—at discovery or at eligibility? Crawling is Google’s fetch step: bots request URLs, render key resources, and log signals. Indexing is the decision step: Google canonicalizes, evaluates quality, and stores a version for ranking. You can get crawled without getting indexed, and you can rank slowly even when crawled frequently.

Audit the gap with data: compare server logs (bot hits), Search Console Crawl Stats, and the Page indexing report. If crawl frequency is healthy but pages sit in “Crawled - currently not indexed,” tighten canonicals, fix soft 404s, and reduce duplicate parameters. If indexation lags after updates, validate lastmod, internal links, and render-blocking scripts. Watch for crawl budget leaking into infinite faceted URLs and prune them at the source.
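One way to make that comparison concrete is a set difference between the URLs Googlebot fetched and the URLs reported as indexed. The sketch below assumes you have already exported both lists (for example, from parsed logs and a Search Console export). The function name and sample URLs are hypothetical.

```python
def crawl_index_gap(crawled_urls, indexed_urls):
    """Split URLs into crawled-but-not-indexed and indexed-but-not-crawled."""
    crawled = set(crawled_urls)
    indexed = set(indexed_urls)
    return {
        "crawled_not_indexed": sorted(crawled - indexed),
        "indexed_not_crawled": sorted(indexed - crawled),
    }


# Hypothetical sample data for illustration only.
crawled = ["/pricing", "/blog/post-1", "/search?q=a", "/search?q=b"]
indexed = ["/pricing", "/blog/post-1", "/about"]

gap = crawl_index_gap(crawled, indexed)
for bucket, urls in gap.items():
    print(bucket, urls)
```

A large "crawled_not_indexed" bucket dominated by parameter URLs usually points at duplication rather than a discovery problem.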

Understand Crawl Budget (Demand vs. Capacity)

Even if your crawl-to-index gap looks clean, SEO can still stall when Google doesn’t fetch the right URLs often enough—this is crawl budget. You manage it by balancing crawl demand and crawl capacity, then validating changes with logs and Search Console.

Crawl demand rises when you publish frequently, change templates, or generate parameterized URLs; it also spikes when internal links push bots toward low-value pages. Crawl capacity depends on how fast and reliably your server responds, plus how many 5xx, 429s, redirects, and slow TTFB events Google hits. Audit access logs to quantify Googlebot hits by status code, response time, and directory. Then reduce waste: consolidate duplicates, block infinite spaces, fix redirect chains, and improve caching/CDN. You’ll free up budget for revenue pages without increasing the URL count.
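The log audit can start as a small script. This is a minimal sketch that assumes the common "combined" access log format. The regular expression, function name, and sample lines are illustrative, and response time is left out because it needs a custom log field. Matching on the user-agent string alone can be spoofed, so treat the counts as approximate until you verify the requesting IPs.

```python
import re
from collections import Counter

# Assumed combined log format; adjust the pattern to your server's format.
LOG_PATTERN = re.compile(
    r'"(?:GET|HEAD) (?P<path>\S+) [^"]*" (?P<status>\d{3}) \S+ '
    r'"[^"]*" "(?P<agent>[^"]*)"'
)


def summarize_googlebot_hits(lines):
    """Count Googlebot requests by (top-level directory, status code)."""
    counts = Counter()
    for line in lines:
        match = LOG_PATTERN.search(line)
        if not match or "Googlebot" not in match.group("agent"):
            continue
        path = match.group("path").split("?", 1)[0]
        segments = [s for s in path.split("/") if s]
        # Root-level pages such as /about are grouped under "/".
        directory = "/" + segments[0] if len(segments) > 1 else "/"
        counts[(directory, int(match.group("status")))] += 1
    return counts


GOOGLEBOT_UA = "Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"
SAMPLE_LINES = [
    f'66.249.66.1 - - [10/Mar/2024:10:00:00 +0000] "GET /products/widget?color=red HTTP/1.1" 200 5120 "-" "{GOOGLEBOT_UA}"',
    f'66.249.66.1 - - [10/Mar/2024:10:00:02 +0000] "GET /products/old-widget HTTP/1.1" 301 0 "-" "{GOOGLEBOT_UA}"',
    '203.0.113.9 - - [10/Mar/2024:10:00:03 +0000] "GET /products/widget HTTP/1.1" 200 5120 "-" "Mozilla/5.0 (Windows NT 10.0)"',
    f'66.249.66.1 - - [10/Mar/2024:10:00:05 +0000] "GET /about HTTP/1.1" 503 0 "-" "{GOOGLEBOT_UA}"',
]

hit_counts = summarize_googlebot_hits(SAMPLE_LINES)
for (directory, status), hits in sorted(hit_counts.items()):
    print(directory, status, hits)
```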

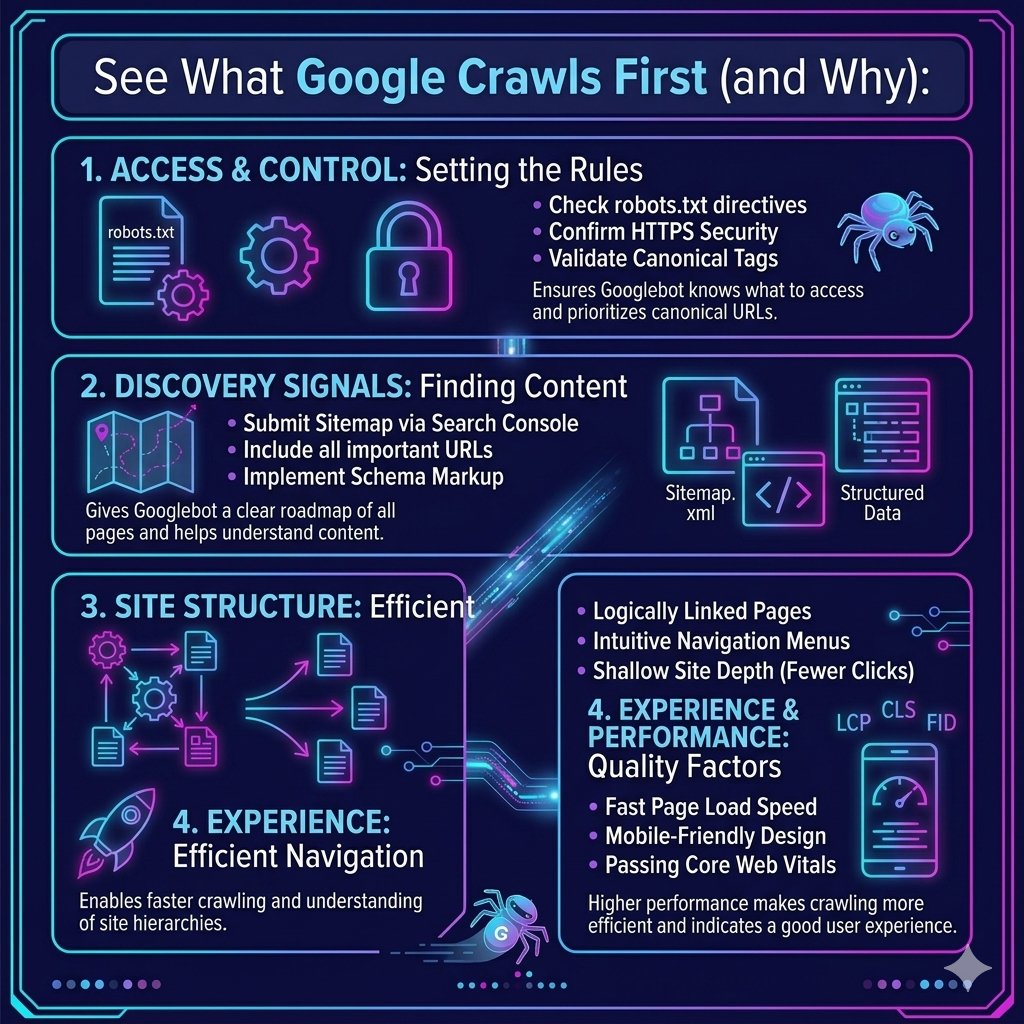

See What Google Crawls First (and Why)

To see what Google crawls first, you need to audit your logs and Search Console crawl stats to identify the exact URLs Googlebot hits most and earliest. Then map those hits to crawl priority signals—internal link depth, freshness, response codes, canonicals, and sitemap coverage—to explain the pattern. Once you know what’s winning crawl attention, you can re-route it toward key URLs by tightening internal linking, fixing redirects/404s, and pruning low-value parameter paths.

Crawl Priority Signals

How does Google decide which URLs get crawled first when your site has thousands competing for attention? It weighs crawl signals and priority signals that predict value, freshness, and discoverability. You can validate this by correlating server log hits with internal link depth, URL popularity, and change frequency.

Audit three levers. First, internal architecture: pages within 3 clicks of high-authority hubs typically earn earlier recrawls. Second, freshness cues: updated content, accurate Last-Modified/ETag headers, and consistent sitemaps reduce wasted fetches and accelerate revisits. Third, crawl efficiency: fast TTFB, clean canonicals, and short redirect chains prevent crawl budget dilution.

Implement: strengthen hub links to revenue pages, fix broken chains, and ship header + sitemap hygiene, then re-check log deltas weekly.
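Internal link depth is easy to measure once you have a link graph. The sketch below assumes a crawler export shaped as a dictionary that maps each page to the pages it links to. The graph and function name are hypothetical. It runs a breadth-first search from the homepage to find the minimum number of clicks to each page.

```python
from collections import deque


def click_depths(link_graph, start):
    """Return the minimum click depth from start to every reachable page."""
    depths = {start: 0}
    queue = deque([start])
    while queue:
        page = queue.popleft()
        for target in link_graph.get(page, ()):
            if target not in depths:
                depths[target] = depths[page] + 1
                queue.append(target)
    return depths


# Hypothetical link graph: page -> pages it links to.
link_graph = {
    "/": ["/category", "/about"],
    "/category": ["/category/widgets"],
    "/category/widgets": ["/product/42"],
    "/product/42": [],
    "/orphan": ["/"],
}

depths = click_depths(link_graph, "/")
orphans = sorted(set(link_graph) - set(depths))
print(depths)
print("unreachable from homepage:", orphans)
```

Pages missing from the result are unreachable from the start page, which is a quick way to surface orphaned URLs.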

Key URLs Googlebot Hits

Next, map “first-hit” URLs to templates. If bots lead with 301 chains, 404s, or slow endpoints, you’ve got a crawl efficiency leak. Fix it fast: consolidate redirects, block junk patterns with robots.txt, add canonical tags, and push priority pages via internal links and updated sitemaps. Re-audit weekly and track shifts in first-hit share, median response time, and 2xx rate.

Stop Crawl Budget Waste: Redirects, Facets, Traps

Although Google’s crawl budget isn’t a hard cap for every site, your logs and crawl stats will show wasted fetches when bots loop through redirect chains, faceted URL permutations, and crawler traps like infinite calendars or session-parameter URLs. Quantify the impact: count 3xx hops per landing URL, measure median chain length, and flag pages that burn >5 fetches before a 200.
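The chain metrics above can be computed from a redirect map. This sketch assumes you have exported redirects as a source-to-target dictionary, and it counts fetches as redirect hops plus the final request, which is one possible reading of the threshold. The function names and sample paths are hypothetical.

```python
from statistics import median


def resolve_chain(start, redirects, max_hops=10):
    """Follow a redirect map from start; return (final_url, hops, looped)."""
    seen = {start}
    current = start
    hops = 0
    while current in redirects and hops < max_hops:
        current = redirects[current]
        hops += 1
        if current in seen:
            return current, hops, True
        seen.add(current)
    return current, hops, False


def chain_report(landing_urls, redirects, fetch_threshold=5):
    """Return hops per URL, median chain length, and URLs to flag."""
    hops = {}
    flagged = []
    for url in landing_urls:
        _, hop_count, looped = resolve_chain(url, redirects)
        hops[url] = hop_count
        # Assumption: fetches before a 200 = redirect hops + the final request.
        if looped or hop_count + 1 > fetch_threshold:
            flagged.append(url)
    median_hops = median(hops.values()) if hops else 0
    return hops, median_hops, flagged


# Hypothetical redirect map: source -> target.
redirects = {"/old": "/older", "/older": "/new", "/a": "/b", "/b": "/a"}
hops, median_hops, flagged = chain_report(["/old", "/new", "/a"], redirects)
print(hops, median_hops, flagged)
```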

Then eliminate redirect waste by collapsing chains to single-step 301s, updating internal links to final URLs, and retiring legacy rules that trigger loops. For facet traps, inventory the parameter combinations that generate near-duplicate content, then enforce canonical targets and server-side constraints that cap permutations. Detect traps by clustering high-frequency crawl paths with low organic sessions and low indexation. Re-test after release: you want fewer wasted bot hits and faster discovery of priority URLs.
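A facet inventory can begin by counting how many distinct parameter combinations bots have requested per path. The sketch below assumes a list of crawled URLs pulled from logs. The function name and sample URLs are hypothetical. Parameters are sorted so that reordered query strings count as one combination.

```python
from collections import defaultdict
from urllib.parse import parse_qsl, urlsplit


def facet_permutations(urls):
    """Map each path to the set of distinct query-parameter combinations seen."""
    combos = defaultdict(set)
    for url in urls:
        parts = urlsplit(url)
        params = tuple(sorted(parse_qsl(parts.query, keep_blank_values=True)))
        if params:
            combos[parts.path].add(params)
    return combos


# Hypothetical crawled URLs.
crawled_urls = [
    "https://example.com/shoes?color=red&size=9",
    "https://example.com/shoes?size=9&color=red",  # same combination, reordered
    "https://example.com/shoes?color=blue",
    "https://example.com/shoes",
]

combos = facet_permutations(crawled_urls)
for path, variants in combos.items():
    print(path, len(variants))
```

Paths with a high variant count and little organic traffic are the first candidates for canonical targets or server-side caps.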

Improve Crawling: Robots, Sitemaps, Internal Links

When your crawl stats show Googlebot spending time on low-value URLs, you can usually fix the bottleneck with three levers you control: robots directives, sitemap hygiene, and internal linking. Start with robots.txt tweaks: disallow parameter patterns, staging paths, and infinite calendars, then verify impact in Crawl Stats and URL Inspection. Next, harden sitemaps: ship only 200-status, canonical, indexable URLs, keep each file at or under 50,000 URLs, and refresh lastmod from your deploy pipeline so recrawl follows real change velocity. Finally, redesign internal links like an API: reduce clicks to priority pages, remove orphaned URLs, and consolidate navigation to fewer, stronger hubs. This is crawling vs. indexing triage: your goal is faster discovery, smarter crawl allocation, and tighter server load control, not more pages.
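Before shipping robots.txt changes, it helps to test draft rules against a sample of URLs. This sketch uses Python's standard-library `urllib.robotparser`. The draft rules and URLs are hypothetical. The standard-library parser does plain prefix matching and does not implement Google's wildcard extensions, so only path-prefix rules are checked here. Parameter patterns that rely on `*` still need verification in Search Console.

```python
from urllib.robotparser import RobotFileParser


def blocked_urls(robots_txt, urls, user_agent="Googlebot"):
    """Return the URLs that the draft robots.txt rules would disallow."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [url for url in urls if not parser.can_fetch(user_agent, url)]


# Hypothetical draft rules using path prefixes only.
DRAFT_RULES = """\
User-agent: *
Disallow: /staging/
Disallow: /calendar/
"""

SAMPLE_URLS = [
    "https://example.com/products/widget",
    "https://example.com/staging/home",
    "https://example.com/calendar/2031/01",
]

blocked = blocked_urls(DRAFT_RULES, SAMPLE_URLS)
print(blocked)
```

Running the same check against your priority URLs is a cheap guard against accidentally disallowing revenue pages.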

Fix “Crawled, Not Indexed”: Canonicals, Duplicates, Quality

Why does Google crawl a URL and still refuse to index it? Usually, you’re signaling “don’t keep this” through duplicates, weak value, or conflicting canonicals. Audit clusters: parameter URLs, faceted pages, printer versions, and near-identical templates. Then fix canonical pitfalls: ensure each page points to itself or the true preferred version, uses absolute URLs, and matches hreflang, sitemap, and internal links. Don’t canonicalize to a URL that 301s.
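Two of the canonical pitfalls above can be checked mechanically. The sketch assumes you have a page-to-canonical mapping from a crawl and a set of URLs known to redirect. Both inputs and the function name are hypothetical. It flags relative canonicals and canonicals that point at redirecting URLs.

```python
from urllib.parse import urlsplit


def canonical_issues(canonicals, redirecting_urls):
    """Flag canonicals that are relative or that point at a redirecting URL."""
    issues = {}
    for page, target in canonicals.items():
        problems = []
        parts = urlsplit(target)
        if not (parts.scheme and parts.netloc):
            problems.append("relative canonical")
        if target in redirecting_urls:
            problems.append("canonical target redirects")
        if problems:
            issues[page] = problems
    return issues


# Hypothetical crawl export: page URL -> canonical URL found on that page.
canonicals = {
    "https://example.com/shoes?color=red": "https://example.com/shoes",
    "https://example.com/boots": "/boots",
    "https://example.com/sandals": "https://example.com/old-sandals",
}
redirecting_urls = {"https://example.com/old-sandals"}

issues = canonical_issues(canonicals, redirecting_urls)
for page, problems in issues.items():
    print(page, problems)
```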

Next, tighten your redirect strategy. Consolidate thin variants with 301s when they shouldn’t exist; otherwise, block generation at the source. Upgrade quality where consolidation isn’t possible: unique primary content above the fold, distinct titles, and non-boilerplate copy. Finally, reduce duplication by normalizing trailing slashes, casing, and UTM handling.
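The normalization rules can be expressed as one function and reused in redirects, canonical tags, and log analysis. This is a minimal sketch, assuming your site treats paths as case-insensitive and prefers no trailing slash. Both are assumptions to confirm before applying it. The function name is hypothetical.

```python
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit


def normalize_url(url):
    """Lowercase host and path, drop trailing slash, strip utm_* parameters."""
    parts = urlsplit(url)
    # Assumption: paths are case-insensitive and the slashless form is preferred.
    path = parts.path.lower().rstrip("/") or "/"
    query = [
        (key, value)
        for key, value in parse_qsl(parts.query, keep_blank_values=True)
        if not key.lower().startswith("utm_")
    ]
    return urlunsplit(
        (parts.scheme.lower(), parts.netloc.lower(), path, urlencode(query), "")
    )


normalized = normalize_url("HTTPS://Example.com/Shoes/?utm_source=news&color=Red")
print(normalized)
```

Grouping crawled URLs by their normalized form shows how many variants collapse into each preferred URL.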

Track Crawl & Index Wins in Search Console + Logs

How do you know your crawl/index fixes actually worked—and didn’t just shift problems around? You validate with Search Console trends and server logs, then tie results to business impact and tracking costs. Start by setting a baseline week, then compare post-release deltas.

- Search Console audit: Check *Crawl stats* for crawl frequency, response codes, and average download time; confirm spikes align with priority URLs, not parameter junk.

- Indexing proof: In *Pages*, measure declines in “Crawled - currently not indexed,” growth in “Indexed,” and faster “Discovered → Crawled → Indexed” timelines for key templates.

- Log-file reality check: Verify Googlebot hits your canonical URLs, follows fewer 3xx chains, and stops hammering thin/duplicate endpoints.

Ship a dashboard, alert on regressions, and iterate weekly.
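The baseline comparison can be as simple as percent change per metric. The sketch below assumes weekly totals that you have already collected from Search Console and logs. The metric names and numbers are hypothetical placeholders, not real results.

```python
def percent_changes(baseline, current):
    """Return percent change per metric, skipping missing or zero baselines."""
    changes = {}
    for name, before in baseline.items():
        if name not in current or before == 0:
            continue
        changes[name] = round((current[name] - before) / before * 100, 1)
    return changes


# Hypothetical weekly totals for illustration only.
baseline = {"googlebot_hits_3xx": 1200, "crawled_not_indexed": 400, "indexed": 5000}
current = {"googlebot_hits_3xx": 300, "crawled_not_indexed": 300, "indexed": 5250}

changes = percent_changes(baseline, current)
for metric, change in changes.items():
    print(f"{metric}: {change:+.1f}%")
```

Alert thresholds can then be set on these percentages, for example flagging any week where 3xx hits rise instead of fall.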

Frequently Asked Questions

How Does JavaScript Rendering Affect Crawling Versus Indexing for Single-Page Apps?

JavaScript rendering lets bots fetch your SPA, but it delays indexing until they execute scripts and see real content. If rendering is slow, your pages wait in Google’s rendering queue, and you’ll see gaps between fetched URLs and indexed URLs. If hydration fails, bots may index empty shells or a partial DOM. Prefer SSR or pre-rendered routes, ship critical HTML, treat dynamic rendering as a workaround rather than a long-term fix, and monitor logs plus indexing reports.

Can Server Response Codes in CDNs Delay Indexing Even if Pages Are Crawled?

Yes—CDN server response codes can delay indexing even when you’re crawled. If your edge returns intermittent 5xx, 429, or soft 404 responses, or inconsistent 200/302 chains, Google may defer indexing until signals stabilize. Audit logs by bot user-agent, correlate CDN latency and status spikes, and sample rendered HTML. Fix with origin shielding, consistent cache rules, correct canonical/redirects, and rate-limit exemptions for crawlers. Re-test via GSC URL Inspection.

How Often Should I Resubmit Sitemaps After Major Site Migrations or Replatforming?

Resubmit your sitemaps immediately after launch, then again 24–72 hours later, and weekly for 4–6 weeks. Picture your new site like runway lights snapping on in fog—you guide bots fast, then confirm alignment. Set a sitemap cadence based on audit signals: index coverage deltas, crawl stats, and 3xx/4xx spikes. Match migration timing to release waves; resubmit after each major URL batch, not daily.

Do Noindex Tags on Paginated Pages Impact Product Discovery and Rankings?

Yes. If you noindex paginated pages, you can reduce product discovery and weaken rankings, especially when deeper SKUs only appear on page 2+. Audit logs and Search Console: count impressions/clicks for paginated URLs, map products to the first page they appear on, and measure internal link depth. If coverage drops, keep indexable pagination or add “view-all,” improve faceted linking, and ensure canonicalization doesn’t hide inventory.
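Mapping products to the first page they appear on takes only a few lines. This sketch assumes a category listing exported as page number to product IDs. The data and function name are hypothetical. Products whose first appearance is on page 2 or later are the ones at risk if those pages are noindexed.

```python
def first_page_of_products(pages):
    """Map each product ID to the lowest page number where it is listed."""
    first_seen = {}
    for page_number, product_ids in sorted(pages.items()):
        for product_id in product_ids:
            first_seen.setdefault(product_id, page_number)
    return first_seen


# Hypothetical category listing: page number -> product IDs on that page.
pages = {
    1: ["sku-1", "sku-2"],
    2: ["sku-2", "sku-3"],
    3: ["sku-4"],
}

first_seen = first_page_of_products(pages)
deep_only = sorted(pid for pid, page in first_seen.items() if page > 1)
print(deep_only)
```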

What Role Do Core Web Vitals Play in Crawl Efficiency and Indexing Speed?

Core Web Vitals don’t “bribe” Google to crawl you faster, but if your pages load like a fax machine, you’ll tank crawl efficiency and indexing speed via slower rendering and more timeouts. Measure LCP, INP, and CLS alongside TTFB, render delay, and 5xx rates in logs. Fix by cutting JS, enabling caching, prioritizing critical CSS, and reducing redirects. Impact: more URLs processed per crawl.

Conclusion

You can’t rank what Google can’t index—and Google can’t index what it doesn’t crawl. When crawl budget leaks into redirects, facets, and traps, your best pages sit invisible. When you tighten robots rules, clean sitemaps, and strengthen internal links, priority URLs surface faster. If you’re stuck with “Crawled, not indexed,” audit canonicals, duplicate clusters, and thin content signals. Then prove impact: compare Search Console coverage, crawl stats, and log-file hit rates before and after changes.