Multi-Location SEO: How to Track Google Rankings Across Service Areas

Most SEO professionals look at rankings as a single national metric. That assumption is quietly destroying the accuracy of their campaigns. Google serves different results depending on where the user physically is, down to the city, district, and, in some cases, even the neighborhood. If you are optimizing a local service page or running geo-targeted ad campaigns, a ranking report pulled from your office in Berlin tells you nothing meaningful about what users in Munich or Hamburg are actually seeing.

The technical reason for this is straightforward: Google localizes SERPs primarily by the IP address of the requesting client. An IP that Google maps to Frankfurt will pull Frankfurt results. A query routed through a residential IP in Cologne will return Cologne results. Without the ability to simulate queries from specific physical locations, rank tracking becomes educated guesswork rather than measurement.

This guide walks through the full technical stack required to track Google rankings by location accurately – from how geo-targeting works at the protocol level to building a scalable monitoring setup that can handle hundreds of locations in parallel.

Why Standard Rank Trackers Give You the Wrong Data

Commercial rank tracking tools – Semrush, Ahrefs, Moz, Serpstat – all face the same fundamental constraint: they send queries from their own infrastructure. That infrastructure is geographically concentrated, typically in US or EU data centers, and Google has learned to identify it. The result is one of two failure modes: either the tool receives generic, non-localized results, or Google detects the automated query pattern and returns degraded or blocked responses.

A related problem is subtler. Some tools claim to support local rank tracking by appending location parameters to the Google Search URL (for example, the gl= or near= parameters). These parameters provide a signal, but they do not override IP-based geolocation. Google’s ranking algorithm places far more weight on the IP’s physical location than on URL parameters, particularly for queries with local intent. The discrepancy between parameter-based and IP-based results can be significant, often five to fifteen positions for competitive local keywords.

To get accurate location-specific rankings, the query must originate from an IP address that Google associates with the target location. This requires a proxy infrastructure with genuine geographic coverage.

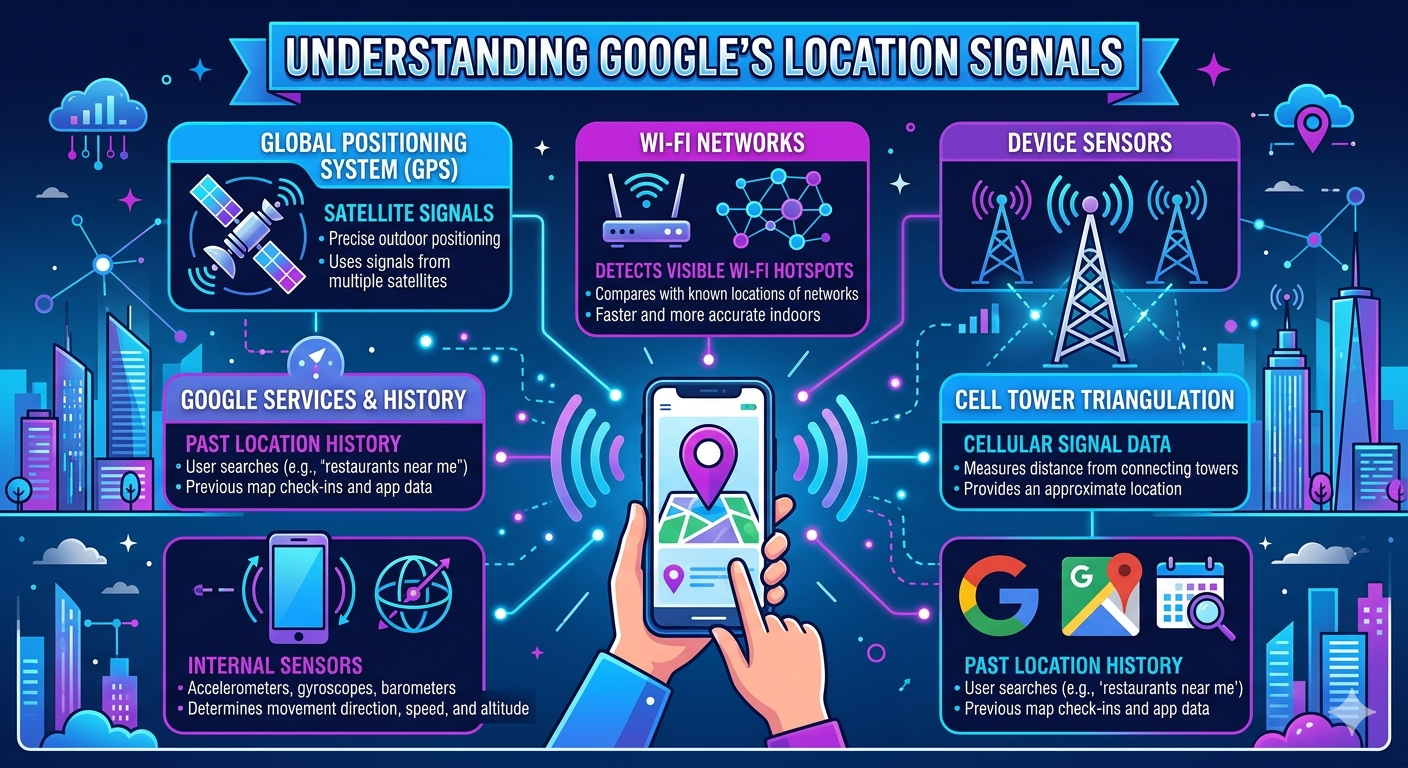

Understanding Google’s Location Signals

Google uses several signals to determine the searcher’s location, and understanding their relative weight helps you build a monitoring setup that actually replicates user experience.

The IP address is the primary signal. Google maintains an extensive database mapping IP ranges to geographic locations. Residential IPs are generally mapped at the city level or more precisely. Data center IPs are typically mapped at the country level, which is why data center proxies produce less accurate localization than residential ones for city-level rank tracking.

The second signal is the Google domain. A query to google.de implies a German user, regardless of IP, but this signal is weaker and is easily overridden by IP geolocation. The third signal, when applicable, is the device’s GPS data via the browser Geolocation API – but this applies only to browser-based searches, not to programmatic API queries or headless browser setups.

For rank tracking purposes, controlling the IP is what matters. A residential proxy IP from a specific city will cause Google to serve that city’s local pack, localized organic results, and location-adjusted featured snippets.

Choosing the Right Proxy Type for Location-Based Rank Tracking

Proxy Type Comparison for Geo-Rank Tracking

| Proxy Type | Geo Precision | Detection Risk | Cost (per IP/month) | Best Use Case |
| --- | --- | --- | --- | --- |
| Data Center IPv4 | Country level | High | $1.40–$3.60 | National rank tracking |
| Shared IPv4 | Country/region | Medium-High | $0.67–$1.47 | Low-volume monitoring |
| Residential IPv4 | City/district | Low | $3.60+ | Local SEO, city-level SERP |
| Mobile IPv4 | City level | Very Low | Variable | Mobile SERP simulation |
| Dynamic Proxies | Rotational | Very Low | $0.27+ | High-volume crawling |

For most local SEO monitoring tasks, residential proxies produce the most reliable geo-signal. Mobile proxies are valuable when you specifically need to track mobile SERPs, since Google’s mobile results can differ substantially from desktop results in certain verticals. Dynamic proxies work well for high-volume scraping scenarios that require rotating IPs at scale without managing individual proxy assignments.

Step-by-Step: Setting Up Location-Based Rank Tracking

Step 1 – Map Your Target Locations to IP Requirements

Start by building a location matrix. For each target market, identify the city-level granularity you need. A national e-commerce site might need 5 to 10 proxies in major cities. A local services business with multiple branches needs one residential IP per service area. Document the country, city, and whether you need separate mobile and desktop tracking for each location.
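A location matrix of this kind can be kept as plain data. The sketch below is one possible shape, not a prescribed format: the `TargetLocation` class, its field names, and the example cities are all assumptions made for illustration.

```python
from dataclasses import dataclass
from itertools import product


@dataclass(frozen=True)
class TargetLocation:
    """One row of the location matrix (hypothetical structure)."""
    country: str                # ISO 3166-1 alpha-2 code, e.g. "DE"
    city: str
    track_mobile: bool = False  # collect a separate mobile SERP as well?


def tracking_jobs(locations, keywords):
    """Expand the matrix into (location, device, keyword) jobs."""
    jobs = []
    for loc, kw in product(locations, keywords):
        jobs.append((loc, "desktop", kw))
        if loc.track_mobile:
            jobs.append((loc, "mobile", kw))
    return jobs


# Example matrix; the cities are chosen purely for illustration.
MATRIX = [
    TargetLocation("DE", "Munich", track_mobile=True),
    TargetLocation("DE", "Hamburg"),
]
```

Expanding the matrix up front makes the query volume per location visible before any proxy is purchased.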

Step 2 – Acquire Geo-Targeted Proxy Infrastructure

Selecting a proxy provider with genuine residential coverage across your target locations is the most important infrastructure decision you will make. Proxys.io/en offers dedicated IPv4 proxies across more than 25 countries, including the US, UK, Germany, France, Australia, Brazil, and India, with residential options available for precise city-level geo-simulation. Each IP is assigned exclusively to one user, eliminating the shared-IP reputation problems that consistently contaminate rank-tracking data.

Step 3 – Configure Your Scraping or Rank Tracking Tool

The proxy must be assigned per target location, not per scraping session. A single session that reuses the same residential IP address for several cities returns that one city’s results for all of them, so the data for every other city is wrong. The correct architecture is one proxy IP per target location, with every query for that location routed exclusively through that IP.
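The one-proxy-per-location routing can be sketched with the Python standard library. This is a minimal illustration under stated assumptions: the `PROXY_BY_CITY` mapping, the function name, and the proxy addresses (taken from a documentation-only IP range) are placeholders, not real endpoints.

```python
import urllib.request

# Hypothetical mapping: one dedicated proxy endpoint per target city.
# Hosts, ports, and credentials are placeholders.
PROXY_BY_CITY = {
    "Munich": "http://user:pass@203.0.113.10:8080",
    "Hamburg": "http://user:pass@203.0.113.20:8080",
}


def opener_for_city(city):
    """Return a urllib opener that routes all traffic through the city's proxy."""
    proxy_url = PROXY_BY_CITY[city]  # raises KeyError if no proxy is assigned
    handler = urllib.request.ProxyHandler(
        {"http": proxy_url, "https": proxy_url}
    )
    return urllib.request.build_opener(handler)
```

Failing loudly on an unmapped city is deliberate here: silently falling back to another city's proxy would reintroduce the mixed-location data this step is meant to prevent.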

For Google Search scraping, use HTTPS proxies with authentication. Set the request headers to match a realistic user agent for the device type you are simulating (desktop Chrome or mobile Safari). Include the appropriate Accept-Language header for the target market – a proxy in France with an English Accept-Language header will return anglicized results that do not reflect what local users see.

Key headers to configure: User-Agent should match a current browser version for the target platform, Accept-Language should be set to the target locale (e.g., fr-FR for France, de-DE for Germany), and the Google domain should match the target country’s TLD.
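As a rough sketch, these headers could be assembled per locale and device as follows. The `USER_AGENTS` strings are illustrative examples that will go stale and should be replaced with current browser versions; the function name and the `q=0.9` language fallback are likewise assumptions.

```python
# Illustrative User-Agent strings only; replace with current browser versions.
USER_AGENTS = {
    "desktop": (
        "Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 "
        "(KHTML, like Gecko) Chrome/124.0.0.0 Safari/537.36"
    ),
    "mobile": (
        "Mozilla/5.0 (iPhone; CPU iPhone OS 17_4 like Mac OS X) "
        "AppleWebKit/605.1.15 (KHTML, like Gecko) Version/17.4 "
        "Mobile/15E148 Safari/604.1"
    ),
}


def build_headers(locale, device="desktop"):
    """Build request headers for a locale such as 'fr-FR' or 'de-DE'."""
    language = locale.split("-")[0]  # 'fr-FR' -> 'fr'
    return {
        "User-Agent": USER_AGENTS[device],
        # Prefer the full locale, then fall back to the bare language.
        "Accept-Language": f"{locale},{language};q=0.9",
    }
```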

Step 4 – Query Structure and Parameter Configuration

Construct your Google Search URLs carefully. For local intent queries, include the location name in the query string only when that is how real users search. Do not artificially append location modifiers to queries that users typically search without them – this distorts the SERP you are measuring. The geo-targeting should come from the proxy IP, not the query string.

Use the hl= parameter to set the interface language and the gl= parameter to set the country. Both should match the proxy location. For example, a proxy in Spain should have gl=es&hl=es. This combination produces the most authentic local SERP simulation.
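A minimal sketch of building such a URL follows. The `GOOGLE_DOMAINS` table and the function name are assumptions for illustration; only the `q`, `gl`, and `hl` parameters come from the text above.

```python
from urllib.parse import urlencode

# Assumed mapping from country code to Google domain; extend as needed.
GOOGLE_DOMAINS = {
    "es": "www.google.es",
    "de": "www.google.de",
    "fr": "www.google.fr",
}


def build_search_url(query, country, language):
    """Build a search URL whose gl/hl values match the proxy's location."""
    params = urlencode({"q": query, "gl": country, "hl": language})
    return f"https://{GOOGLE_DOMAINS[country]}/search?{params}"
```

For a proxy in Spain, `build_search_url("fontanero urgente", "es", "es")` yields `https://www.google.es/search?q=fontanero+urgente&gl=es&hl=es`. The query itself carries no city name; the location comes from the proxy.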

Step 5 – Data Collection Cadence and Anti-Detection

Google rate-limits scraping aggressively. Queries that come too fast from a single IP trigger CAPTCHA challenges or temporary blocks, even with residential IPs. A safe cadence for residential proxies is one query every 15 to 30 seconds per IP. For higher volume, scale horizontally – more IPs rather than faster queries per IP.

Rotate your user agents alongside IP rotation if you are using dynamic proxies. Consistent user agent strings serve as fingerprinting signals when combined with rotating IP addresses. Randomize request timing slightly to avoid mechanical intervals that automated detection systems identify.
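The pacing and rotation described in this step might look like the sketch below. The 15 to 30 second window is taken from the text; the function names and the idea of passing in a random source for testing are assumptions.

```python
import random

MIN_DELAY_S = 15.0  # lower bound of the per-IP pause suggested above
MAX_DELAY_S = 30.0  # upper bound of the per-IP pause suggested above


def next_delay(rng=random):
    """Pick a randomized pause so requests do not fall on a fixed interval."""
    return rng.uniform(MIN_DELAY_S, MAX_DELAY_S)


def pick_user_agent(pool, rng=random):
    """Choose a User-Agent from the pool for the next request."""
    return rng.choice(pool)
```

In a collection loop, `time.sleep(next_delay())` would sit between consecutive queries on the same IP, while separate IPs run their own loops in parallel.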

Interpreting Location-Specific Ranking Data

Common Discrepancy Patterns and Diagnostic Actions

| Observation | Likely Cause | Diagnostic Step |
| --- | --- | --- |
| Rankings vary by 10+ positions between cities | True local SERP variation | Verify with a manual search from the same IP |
| All locations return identical results | IP not being geo-resolved | Check proxy geo with ipinfo.io before querying |
| CAPTCHA appearing frequently | Request rate too high | Increase the delay between queries |
| Results show a generic national pack | Data center IP used instead of residential | Switch to a residential proxy for the target city |
| Ranking jumps inconsistently | Shared IP contamination or IP reuse | Switch to dedicated residential IPs |
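The ipinfo.io check from the table can be scripted before each collection run. The sketch below assumes the public `https://ipinfo.io/json` endpoint and its `country` and `city` fields; the function names are hypothetical. ipinfo.io's idea of a location is not necessarily the same as Google's, and its city spelling may differ from your own, so treat a mismatch as a prompt to investigate rather than proof.

```python
import json
import urllib.request


def proxy_geo(proxy_url, timeout=10):
    """Ask ipinfo.io which location it sees for traffic leaving the proxy."""
    handler = urllib.request.ProxyHandler(
        {"http": proxy_url, "https": proxy_url}
    )
    opener = urllib.request.build_opener(handler)
    with opener.open("https://ipinfo.io/json", timeout=timeout) as resp:
        return json.load(resp)


def geo_matches(info, expected_country, expected_city):
    """Compare an ipinfo-style payload against the intended target."""
    return (
        info.get("country", "").upper() == expected_country.upper()
        and info.get("city", "").casefold() == expected_city.casefold()
    )
```

A run would call `geo_matches(proxy_geo(proxy_url), "DE", "Cologne")` and skip any proxy that does not resolve to its assigned city.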

When you see wide ranking variations between cities for the same keyword, that is not noise – it is a real signal. Google’s local algorithm weighs the business’s proximity to the searcher, prominence signals from local citation sources, and behavioral signals from that specific market. A page ranking third in London may rank twelfth in Manchester for the same keyword because the competitive landscape is different and the business has stronger local signals in one market than the other.

Understanding these variations is only possible when your measurement infrastructure actually reflects them. Tools that flatten this data into a single national average are hiding the most actionable information in your rank tracking dataset.

Scaling to Multi-Location Monitoring

Once the single-location setup is working cleanly, the architecture scales by adding proxy IPs for each additional location and parallelizing collection. A monitoring system covering 50 cities with daily checks across 100 keywords requires 5,000 queries per collection cycle. At one query per 20 seconds per IP, this comes to roughly 28 IP-hours of capacity. With one dedicated residential proxy per city, as Step 3 requires, each IP handles only 100 queries, so 50 proxies running in parallel finish a cycle in about 33 minutes.
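The capacity arithmetic can be checked with a small helper. The function name and the `parallel_ips` parameter are assumptions for illustration; the inputs are the figures from the paragraph above.

```python
def cycle_capacity(cities, keywords, seconds_per_query, parallel_ips):
    """Return (total queries, IP-hours, wall-clock hours) for one cycle."""
    total_queries = cities * keywords
    ip_hours = total_queries * seconds_per_query / 3600
    # Assumes the queries are spread evenly across the parallel IPs.
    wall_clock_hours = ip_hours / parallel_ips
    return total_queries, ip_hours, wall_clock_hours
```

Assuming one proxy per city, `cycle_capacity(50, 100, 20, 50)` gives 5,000 queries, about 27.8 IP-hours, and about 0.56 hours (roughly 33 minutes) of wall-clock time. A smaller pool stretches the wall-clock time proportionally.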

For teams managing SEO across multiple markets simultaneously, understanding how to configure proxies for automated scraping workflows is foundational. The technical setup for rank tracking shares significant overlap with broader web scraping proxy configuration best practices, including connection pooling, error handling for blocked requests, and managing IP reputation over time.

At scale, the most common failure point is IP reputation degradation. A residential IP that has been used heavily for scraping accumulates signals that Google uses to downgrade its trustworthiness. Monitor your block rate per IP: if a specific IP starts returning CAPTCHAs on more than 5% of its queries, rotate it out of your primary tracking pool.
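A per-IP block-rate check along these lines could be sketched as follows. The 5% threshold comes from the text; the class name, the `MIN_SAMPLES` guard against judging an IP on a handful of requests, and the in-memory counters are assumptions.

```python
from collections import defaultdict

CAPTCHA_THRESHOLD = 0.05  # 5% block rate, per the guideline above
MIN_SAMPLES = 20          # assumed minimum sample before judging an IP


class BlockRateMonitor:
    """Track CAPTCHA/block responses per proxy IP (in-memory sketch)."""

    def __init__(self):
        self._total = defaultdict(int)
        self._blocked = defaultdict(int)

    def record(self, ip, blocked):
        """Record one query outcome for the given IP."""
        self._total[ip] += 1
        if blocked:
            self._blocked[ip] += 1

    def block_rate(self, ip):
        """Fraction of this IP's queries that were blocked (0.0 if none yet)."""
        total = self._total[ip]
        return self._blocked[ip] / total if total else 0.0

    def should_rotate(self, ip):
        """True once the IP has enough samples and exceeds the threshold."""
        return (
            self._total[ip] >= MIN_SAMPLES
            and self.block_rate(ip) > CAPTCHA_THRESHOLD
        )
```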

What Accurate Location Data Actually Changes

The practical value of geo-accurate rank tracking compounds quickly. Ad teams can cross-reference paid keyword bids with organic ranking data per city, reducing spend in markets where organic coverage is already strong. Content teams can identify cities where a page ranks 11th or 12th, just off the first page, where targeted optimization efforts will have the highest ROI. Local landing page performance becomes measurable against actual search behavior in each market rather than against indirect stand-in metrics.
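Finding those near-miss cities is a simple filter once per-city positions are stored. The sketch below assumes a dictionary mapping city to position, with `None` for "not found"; the function name and the sample data are made up for illustration.

```python
def striking_distance(rank_by_city, low=11, high=12):
    """Return cities where the page ranks just off the first page."""
    return sorted(
        city
        for city, rank in rank_by_city.items()
        if rank is not None and low <= rank <= high
    )


# Made-up positions for a single keyword, for illustration only.
SAMPLE_RANKS = {"London": 3, "Manchester": 12, "Leeds": 11, "Bristol": None}
```

With the sample data, `striking_distance(SAMPLE_RANKS)` returns `["Leeds", "Manchester"]`.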

More importantly, geo-accurate rank tracking catches Google’s regional algorithm updates before they appear in aggregated tools. When Google updates its local pack algorithm or adjusts how proximity signals are weighted, the effects show up in location-specific data days before they become visible in national averages. That early warning window is where competitive advantage gets built.

Conclusion

Tracking Google rankings by location is not a feature request – it is a measurement requirement for any SEO program operating in markets with local intent. The technical components are not complex: residential proxies with genuine geo-resolution, correctly configured query headers, and a scraping cadence that avoids triggering rate limits.

The constraint is always infrastructure quality. Data center proxies produce country-level geo-signals at best. Shared IPs introduce contamination from other users’ query patterns. Dedicated residential proxies, assigned one per location and configured correctly, are what close the gap between what your rank tracker reports and what users in that city actually see when they search.

Build the measurement foundation correctly before investing in optimization. Everything downstream – content decisions, local citation building, ad budget allocation – depends on data that only geo-accurate rank tracking can provide.